About App-V Scheduler

In short: the vision of App-V Scheduler is to reduce complexity and give you visibility and control over your App-V 5 deployment. If you want to learn more about the history of App-V Scheduler, please read more about the previous releases here and here.

What’s new in App-V Scheduler 2.2

Today we are excited to announce the latest version of App-V Scheduler, which adds many new features and improvements. In this post we walk through what is new in version 2.2.

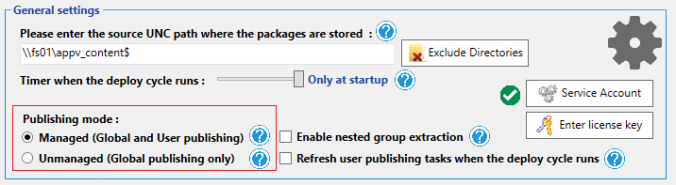

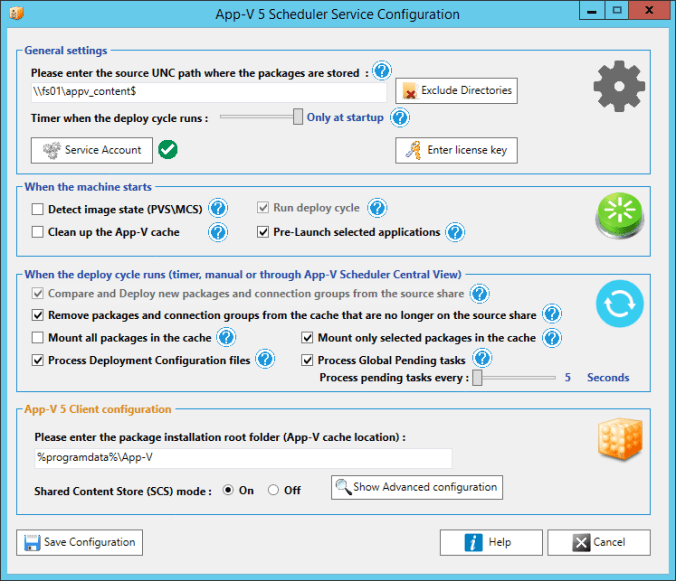

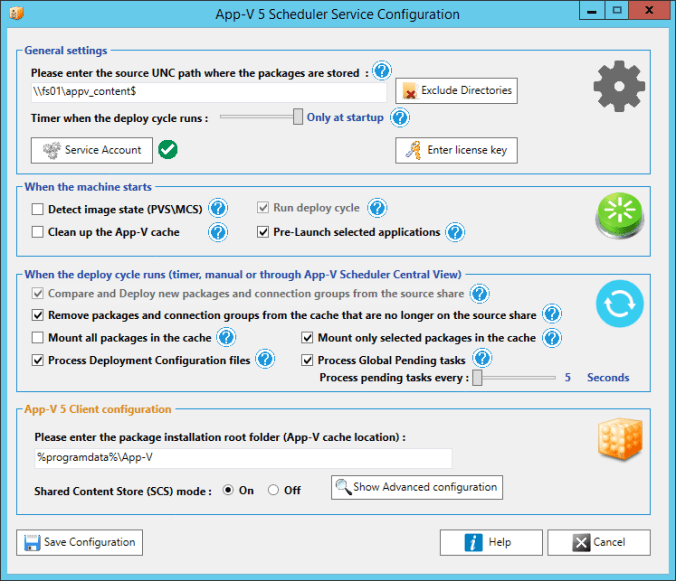

Let’s start with the updated service configuration window:

As you can see, the configuration is now grouped, which makes it much clearer how the service works. The configuration is split into two main events:

Machine boot event

Here you can configure what should happen when the machine (re)boots: for example, whether the App-V cache should be cleared so that you always start with a fresh cache, or whether App-V Scheduler should detect the image state to see if the image is in read-only or read\write mode. The deploy cycle is always triggered directly when the machine boots, to make sure all packages are present when users log in to the system. The application pre-launch functionality lets you start selected (virtual) applications to improve the initial launch time of applications for the first users logging in to the system.
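To make the boot options a bit more concrete, here is a minimal Python sketch of how such a boot sequence could be planned. It is not App-V Scheduler code: the `BootSettings` fields, the action names and the order of the steps are all assumptions made for illustration. The only behavior taken from this post is that a detected read\write image means no packages are deployed.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BootSettings:
    """Hypothetical settings that mirror the boot options described above."""
    clear_cache: bool = False
    detect_image_state: bool = False
    prelaunch_apps: List[str] = field(default_factory=list)


def plan_boot_actions(settings: BootSettings, image_is_read_write: bool) -> List[str]:
    """Return the ordered list of actions to run at machine boot."""
    # Assumption: when image state detection is on and the image is in
    # read\write (maintenance) mode, nothing is touched at all.
    if settings.detect_image_state and image_is_read_write:
        return []

    actions: List[str] = []
    if settings.clear_cache:
        actions.append("clear-cache")
    # The deploy cycle runs at every boot so packages are present at logon.
    actions.append("deploy-cycle")
    # Pre-launch comes last because it needs the packages to be deployed.
    for app in settings.prelaunch_apps:
        actions.append(f"pre-launch:{app}")
    return actions
```

With `clear_cache=True` and one pre-launch application, a read-only boot yields the clear, deploy and pre-launch steps in that order, while a read\write boot with detection enabled yields no actions.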

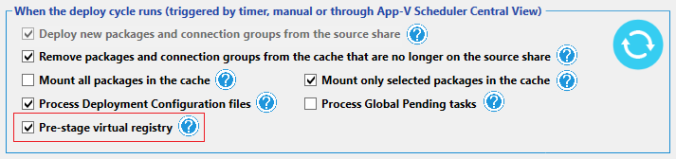

Deploy cycle event

One of the main tasks of the deploy cycle is to handle the deployment of new packages and connection groups by comparing which ones are already present in the cache with the ones on the source share. The deploy cycle can be triggered in three ways:

Let’s summarize the new features that are now part of the deploy cycle:

Exclude configured directories on the source share

You can now select directories on the content share that the deploy cycle should exclude. For example, this allows you to archive old package versions in a directory called archive. The option is also very useful when multiple machine groups look at the same content share: they can share the folders that hold common packages, while Machine group A is configured to exclude Folder B and Machine group B is configured to exclude Folder A. This allows you to create a very flexible App-V deployment structure. You configure excluded directories in the general settings of App-V Scheduler (see the service configuration screenshot above).
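The idea of excluding directories can be illustrated with a small Python sketch. This is only an approximation under stated assumptions: `find_packages` is a hypothetical helper, and matching excluded directories by name (case-insensitive, at any depth) is a guess, not documented App-V Scheduler behavior.

```python
import os
from typing import Iterable, List


def find_packages(share_root: str, excluded_dirs: Iterable[str]) -> List[str]:
    """Collect .appv files under share_root, skipping excluded directories.

    Assumption: a directory is excluded when its name matches an entry in
    excluded_dirs, regardless of case and of where it sits in the tree.
    """
    excluded = {name.lower() for name in excluded_dirs}
    found: List[str] = []
    for current, dirs, files in os.walk(share_root):
        # Prune in place so os.walk never descends into excluded directories.
        dirs[:] = [d for d in dirs if d.lower() not in excluded]
        for filename in files:
            if filename.lower().endswith(".appv"):
                found.append(os.path.join(current, filename))
    return sorted(found)
```

In the two machine group scenario, group A would call this with `["Folder B", "archive"]` and group B with `["Folder A", "archive"]`, so both still see the common folders.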

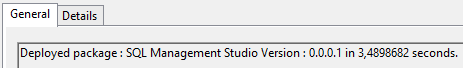

Timing information

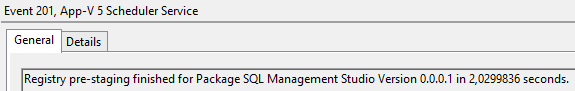

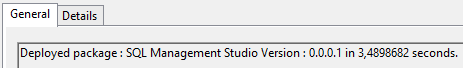

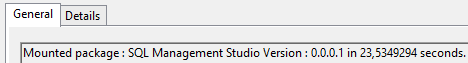

An important part of your App-V 5 deployment is a good understanding of how much time it takes to deploy packages, so you can plan accordingly. For example, if you use a non-persistent image technology like Citrix MCS\PVS and want to load all packages when the machine boots in read-only mode, it is important to know how long this process takes. App-V Scheduler 2.2 tells you exactly how long the deploy cycle took to finish, and now also shows timing information per package. For example, you can see how long it took to deploy a package:

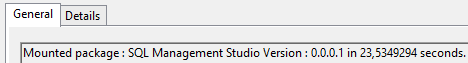

And if you configured the package to mount (pre-cache) inside the cache, it will also show you the time this process took per package:

Besides timing information, App-V Scheduler logs every action to its own event log source in a very readable manner. This not only makes troubleshooting easy but also gives you a good understanding of how your App-V deployment is operating.
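As a rough illustration of per-package and per-cycle timing, the following Python sketch measures both around a deploy callback. The `timed_deploy` function and the `deploy` callback are hypothetical stand-ins; how App-V Scheduler measures and logs these values internally is not described in this post.

```python
import time
from typing import Callable, Dict, Iterable, Tuple


def timed_deploy(
    packages: Iterable[str],
    deploy: Callable[[str], None],
) -> Tuple[Dict[str, float], float]:
    """Run deploy() for each package and record elapsed seconds.

    Returns a (per_package_seconds, total_cycle_seconds) tuple.
    """
    per_package: Dict[str, float] = {}
    cycle_start = time.perf_counter()
    for name in packages:
        start = time.perf_counter()
        deploy(name)  # hypothetical callback that adds/publishes the package
        per_package[name] = time.perf_counter() - start
    total = time.perf_counter() - cycle_start
    return per_package, total
```

The same pattern could wrap the mount (pre-cache) step separately, which would give one duration for deploying a package and another for mounting it.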

Support for deployment configuration files

After you have created (sequenced) a package, you may want to make certain modifications to the package configuration when it gets deployed. You can use the deployment configuration file for this. We recommend using the ACE utility from Virtual Engine, which makes it very easy and transparent to modify the deployment configuration file. After you have modified the file, save it in the same folder as the package with the “Save as App-V Scheduler configuration file” option in ACE. This saves the file with the .appd extension, and App-V Scheduler processes the configuration automatically when the package is added to the machine.
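The lookup of a configuration file next to a package might look like the Python sketch below. It is a guess at the general shape only: `find_deployment_configs` is a hypothetical helper, and this post does not say how App-V Scheduler names or matches the .appd file, so the sketch simply lists every .appd file in the package folder.

```python
from pathlib import Path
from typing import List


def find_deployment_configs(package_path: str) -> List[Path]:
    """Return the .appd files stored in the same folder as the package.

    Assumption: each package lives in its own folder, so any .appd file
    found there belongs to that package.
    """
    folder = Path(package_path).parent
    return sorted(
        entry
        for entry in folder.iterdir()
        if entry.is_file() and entry.suffix.lower() == ".appd"
    )
```

An empty result would mean the package is added with its default configuration.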

Remove packages and connection groups from the cache that are no longer on the source share

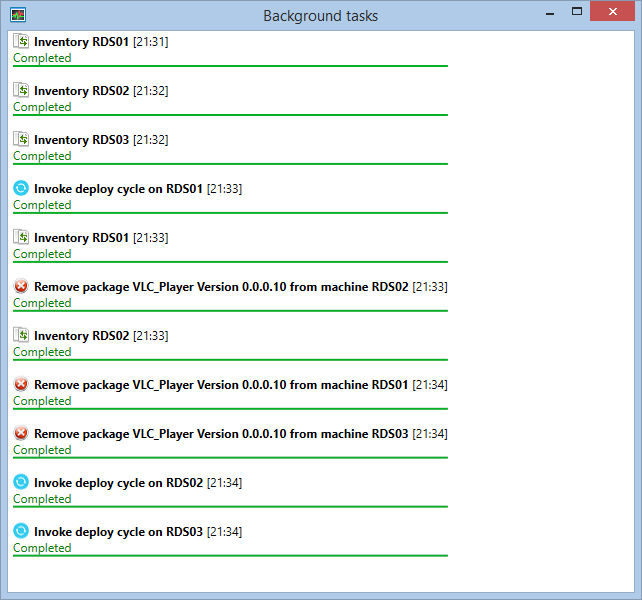

The deploy cycle can now automatically remove packages and connection groups from the App-V cache that are no longer on the source share. For example, when you remove or archive older versions of a package, App-V Scheduler makes sure they are also removed from the cache on the App-V client. This keeps your cache in balance with the content on the source share, and it is especially useful in persistent use cases where you don’t flush the whole cache when the machine boots but still want to keep control of the cache size. This feature also allows you to drain a package:

Drain and exclude package option

Maybe this sounds familiar: you want to remove a package from the source share, but the moment you try to move or remove the .appv file you receive a message that the action cannot be completed because the file is currently in use. There are different reasons why this happens. For example, you use SCS mode, where the App-V client on the machine keeps the .appv file on the share open because it reads data from it directly. It can also happen when you use the default stream option in App-V 5, where the package is streamed to the client on demand. This is very annoying when you want to remove or archive old packages. With this new option you can select a package and simply choose the drain and exclude package option. When this option is selected, two things happen when the deploy cycle runs:

- The deploy cycle will skip the package to prevent it from deploying again

- The “remove packages that are no longer on the source share” feature will believe the package is no longer on the share and remove it from all the machines in the group

Now that the package is drained, you can easily archive it or move it to another location, all with a single click.
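The interplay between the deploy cycle, the removal feature and the drain option can be sketched with plain set arithmetic. The Python below is an illustrative model, not the actual implementation: `plan_deploy_cycle` and its parameters are hypothetical, and packages are represented by simple name strings.

```python
from typing import Dict, Iterable, Set


def plan_deploy_cycle(
    on_share: Iterable[str],
    in_cache: Iterable[str],
    drained: Iterable[str] = (),
    remove_missing: bool = True,
) -> Dict[str, Set[str]]:
    """Work out which packages to add to and remove from the cache."""
    # A drained package is treated as if it were no longer on the share:
    # it is skipped for deployment and becomes a removal candidate.
    effective_share = set(on_share) - set(drained)
    cache = set(in_cache)

    to_add = effective_share - cache
    # Removal only happens when the "remove packages that are no longer
    # on the source share" feature is enabled.
    to_remove = cache - effective_share if remove_missing else set()
    return {"add": to_add, "remove": to_remove}
```

For example, with `A_v1`, `A_v2` and `B` on the share, `A_v1` and `C` in the cache, and `A_v1` drained, the plan adds `A_v2` and `B` and removes `A_v1` and `C`, after which the `A_v1` file is no longer held open by the clients.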

This feature also allows integration with other products like AmberReef, which can automate the whole packaging, test and release workflow for you. For more information about this integration check out the better2gether video.

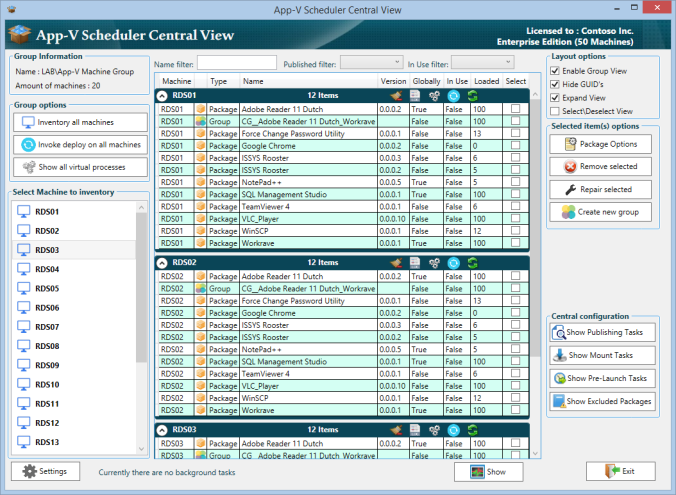

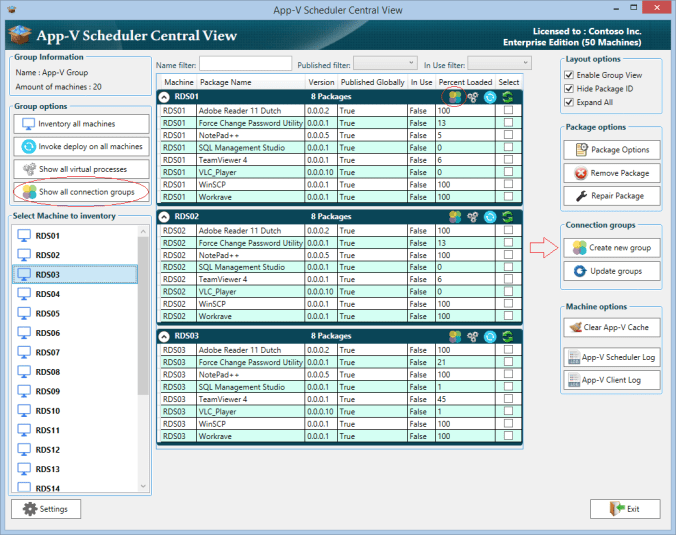

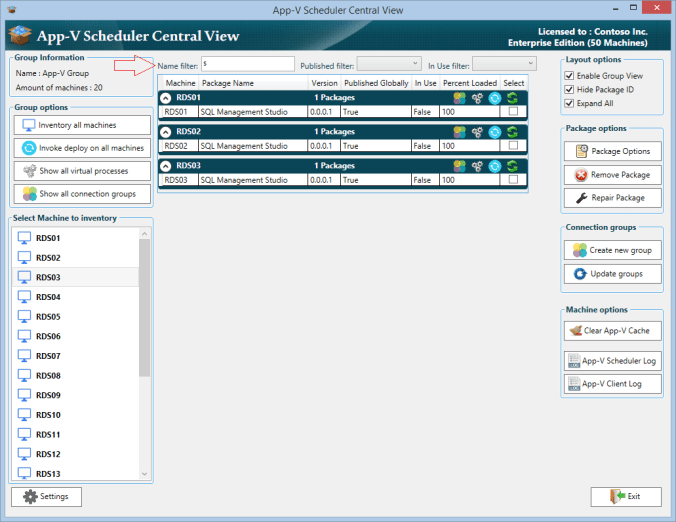

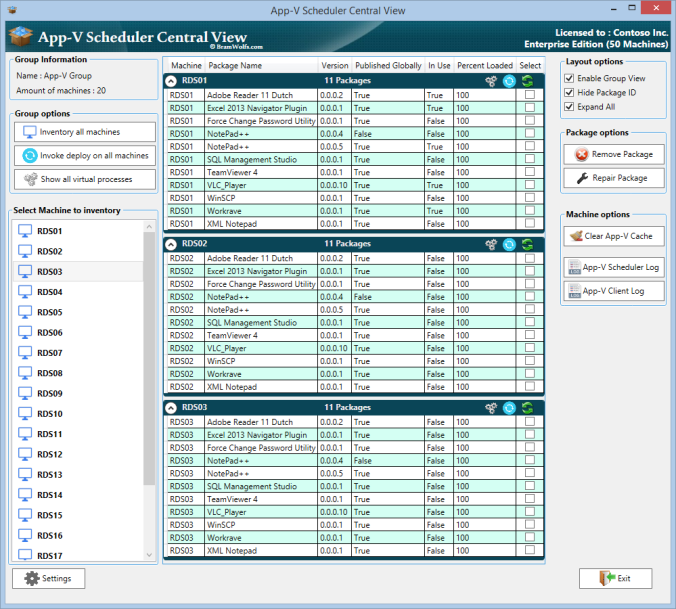

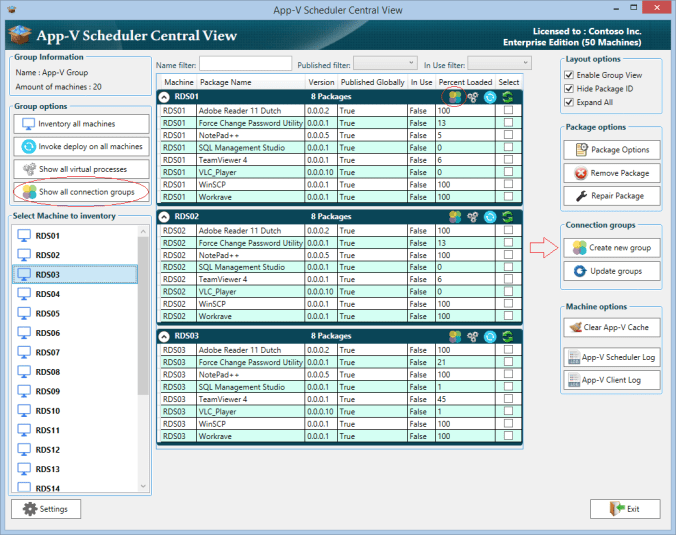

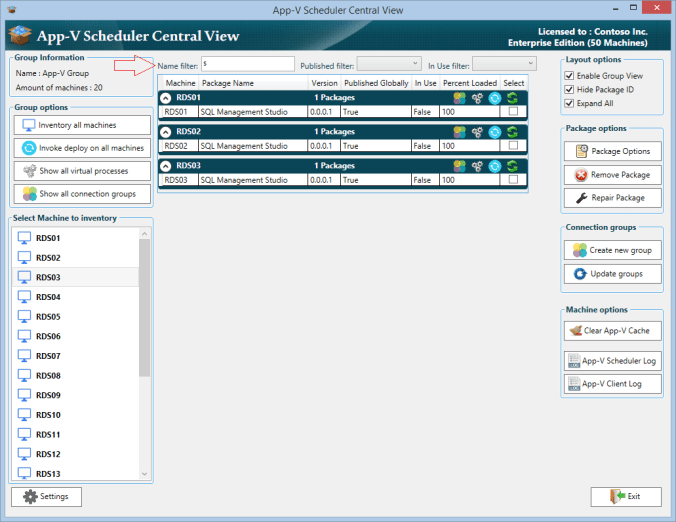

New features in the App-V Scheduler Central View console

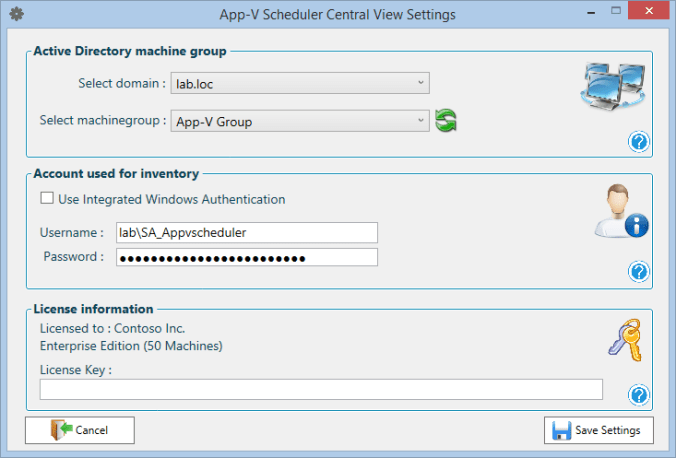

If you don’t know App-V Scheduler Central View yet, it’s the centerpiece of your App-V Scheduler deployment. Central View is a lightweight management console that gives you real-time insight into and control over your App-V 5 deployment. Central View doesn’t need a dedicated server and can easily be deployed as an App-V package alongside the rest of your management tool set. Let’s run down the new features in Central View:

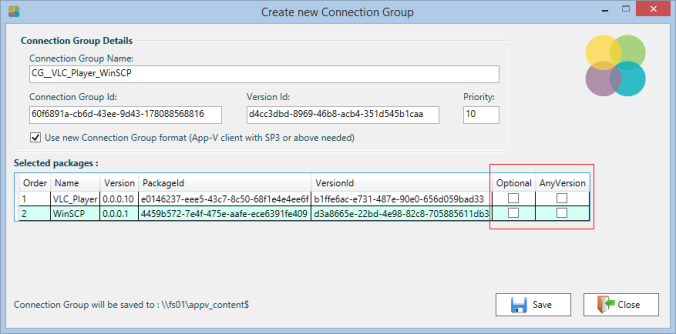

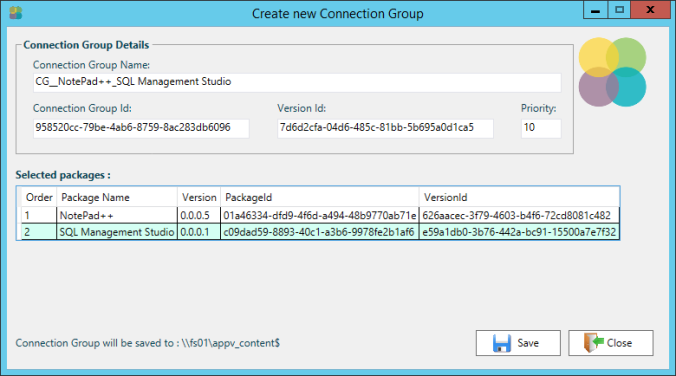

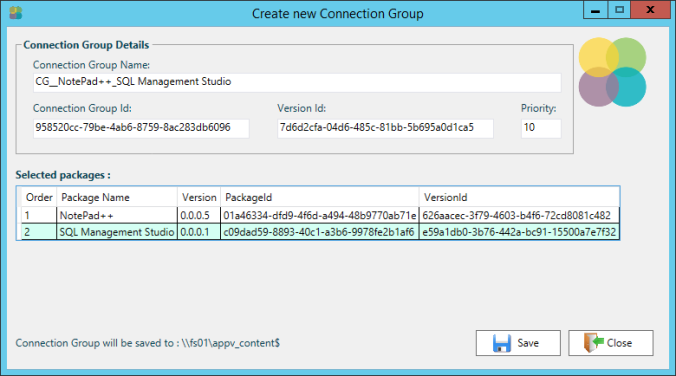

It’s now possible to create and manage connection groups directly from Central View:

Deploying a connection group is very easy: select two or more packages and click on new connection group, then optionally change the priority and the name, and click on save. The connection group is saved automatically to your content share, where the deploy cycle picks it up and deploys it for you. Below is a screenshot of the new connection group window:

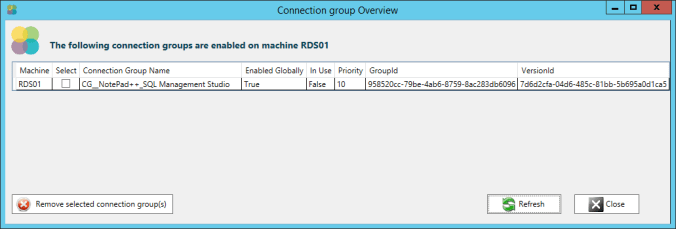

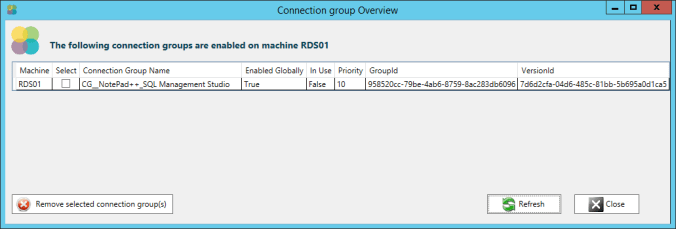

Central View allows you to control all machines in a machine group at once, or individual machines, by clicking on the icons in the machine header. For example, it is very easy to invoke a deploy cycle on all machines at once or only on a selected machine. The same applies to connection groups: you can view the deployed connection groups on all machines in a single view, or only the connection groups on a selected machine:

You can also select and remove connection groups directly from here.

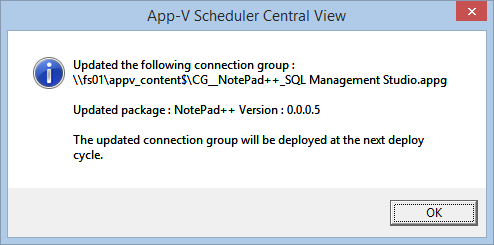

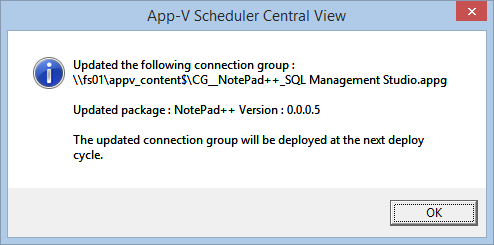

The update connection groups option allows you to update existing connection groups on the source share, so you don’t need to manually edit them or create new ones. It is especially useful when you update a package that is part of an existing connection group: the only thing you have to do is deploy the updated package, select it and click on the update groups button:

Central View now has real-time filters to quickly see all packages that are in use or to easily search for a package. For example, if you type the first letter of a package name, the view is filtered in real time:

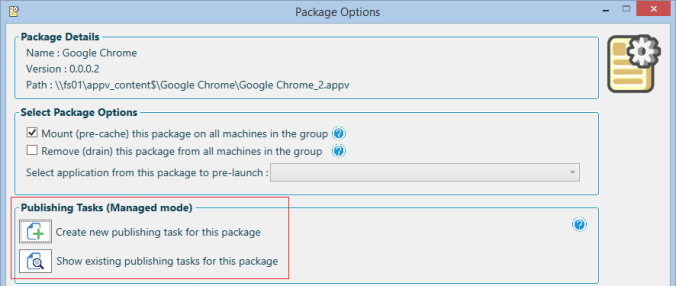

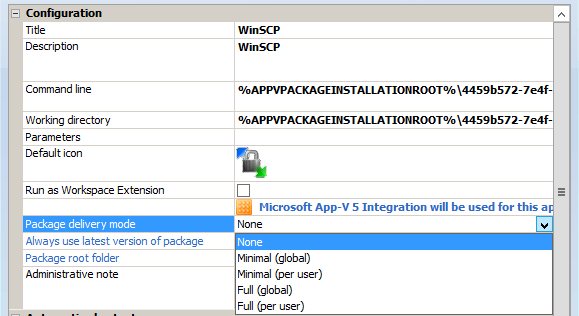

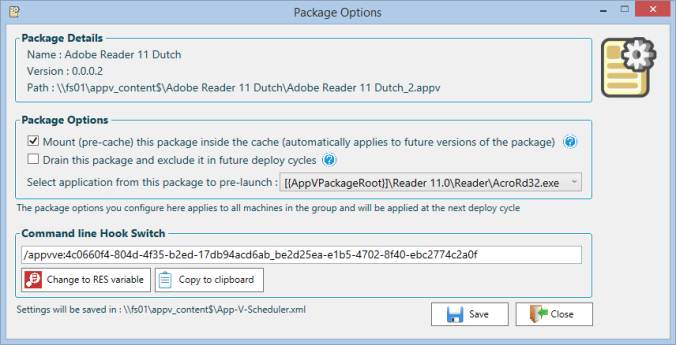

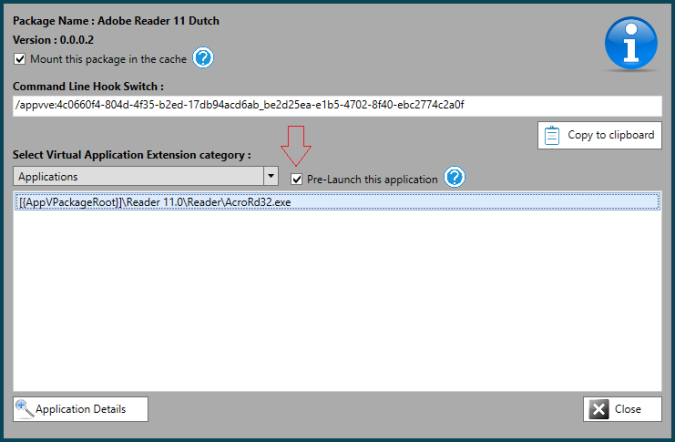

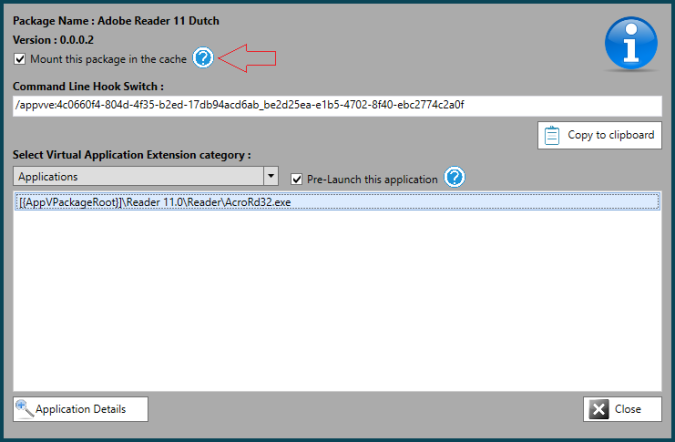

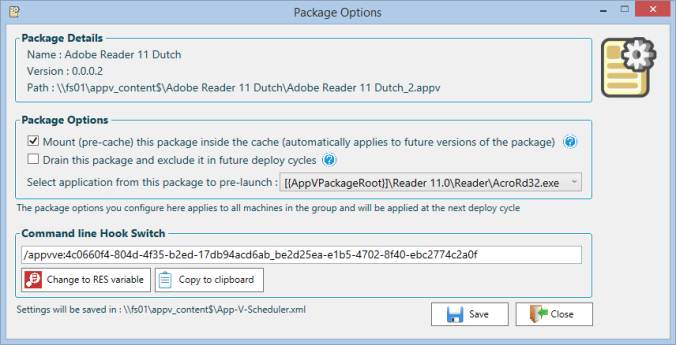

The package options have been moved from the App-V Scheduler GUI on the machine itself to the Central View console to give a more central configuration experience. Simply select a package and click on package options:

Here you can configure whether the package should be fully mounted inside the cache, or whether you want to drain and exclude the package. You can also select an application entry from the package to pre-launch, so that the virtual environment has already been loaded once before your users log in to the system, which improves the user experience. The window also gives you a quick overview of the package details and access to the command line hook switch, which you can pass as a parameter to native applications to run them inside the virtual environment of the package.

A few examples of validated App-V Scheduler deployments

The following examples can give you some insight into how App-V Scheduler can be configured. These configurations are validated to work, but they are only intended to give you an idea of the possibilities. They can be used as a guideline, but they are not meant as a best practice or recommended configuration.

Example of App-V Scheduler deployment in combination with non-persistent machines (like MCS\PVS)

- Move the App-V Cache to a persistent drive (for example the same one as the write-cache)

- Configure App-V Scheduler to detect the image state (don’t deploy packages when in read\write mode)

- Configure App-V Scheduler to clean the cache after reboot (needed because drive is persistent)

- Configure App-V Scheduler to remove packages that are no longer on source share (keep cache in balance during run-time and easily allows draining of packages)

- Use SCS mode in combination with the mount specific packages option (mount packages that either perform better when fully cached or that you want to be more highly available). The combination gives you the best of both worlds

- Set the deploy cycle timer to manual and use Central View to invoke the deploy cycle remotely. You can do this on a test machine first. After tests are performed and application functionality is verified, invoke the deploy cycle on the production machines (by selecting the invoke deploy cycle on all machines in the group option)

Example of App-V Scheduler deployment in combination with persistent machines

- Keep the App-V cache on the default location

- Don’t clean the cache at machine reboot

- Configure App-V Scheduler to remove packages that are no longer on source share (keep cache in balance with source)

- Use SCS mode in combination with the mount specific packages option (mount packages that either perform better when fully cached or that you want to be more highly available). The combination gives you the best of both worlds

- Set the deploy cycle timer to manual and use Central View to invoke the deploy cycle remotely. You can do this on a test machine first. After tests are performed and application functionality is verified, invoke the deploy cycle on the production machines (by selecting the invoke deploy cycle on all machines in the group option)
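To compare the two example deployments side by side, they can be written down as data. The Python sketch below is purely illustrative: `SchedulerProfile` and its field names are hypothetical and do not correspond to real App-V Scheduler settings keys. The persistent example above does not mention image state detection, so `detect_image_state=False` for that profile is an assumption.

```python
from dataclasses import asdict, dataclass
from typing import Any, Dict, Tuple


@dataclass(frozen=True)
class SchedulerProfile:
    """Hypothetical summary of the options used in the examples above."""
    cache_location: str
    detect_image_state: bool
    clear_cache_on_boot: bool
    remove_missing_packages: bool
    scs_mode: bool
    mount_selected_packages: bool
    deploy_cycle_timer: str


NON_PERSISTENT = SchedulerProfile(
    cache_location="persistent drive",
    detect_image_state=True,
    clear_cache_on_boot=True,
    remove_missing_packages=True,
    scs_mode=True,
    mount_selected_packages=True,
    deploy_cycle_timer="manual",
)

PERSISTENT = SchedulerProfile(
    cache_location="default",
    detect_image_state=False,  # assumption: not listed in the example
    clear_cache_on_boot=False,
    remove_missing_packages=True,
    scs_mode=True,
    mount_selected_packages=True,
    deploy_cycle_timer="manual",
)


def differences(a: SchedulerProfile, b: SchedulerProfile) -> Dict[str, Tuple[Any, Any]]:
    """Return the fields whose values differ, as name -> (a_value, b_value)."""
    left, right = asdict(a), asdict(b)
    return {key: (left[key], right[key]) for key in left if left[key] != right[key]}
```

Calling `differences(NON_PERSISTENT, PERSISTENT)` shows that the two examples differ only in cache location, image state detection and cache cleaning at boot.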

For more information about supported App-V 5 cache combinations with App-V Scheduler, please read the Administrator guide which is part of the download.

What’s next for App-V Scheduler

New features are added frequently to App-V Scheduler and feature requests are always welcome!

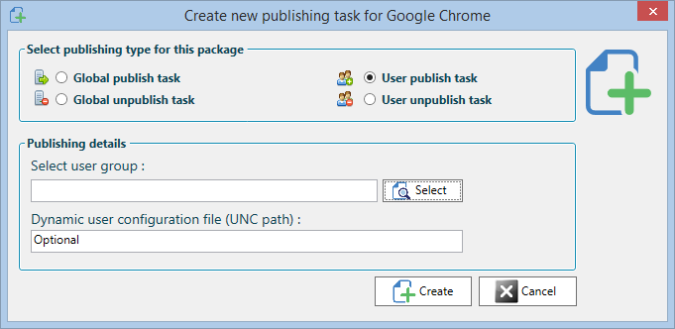

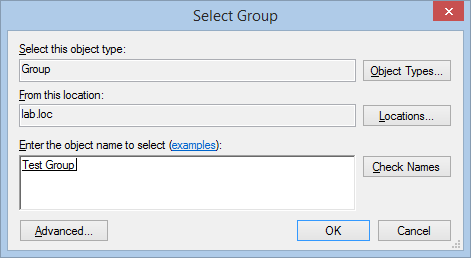

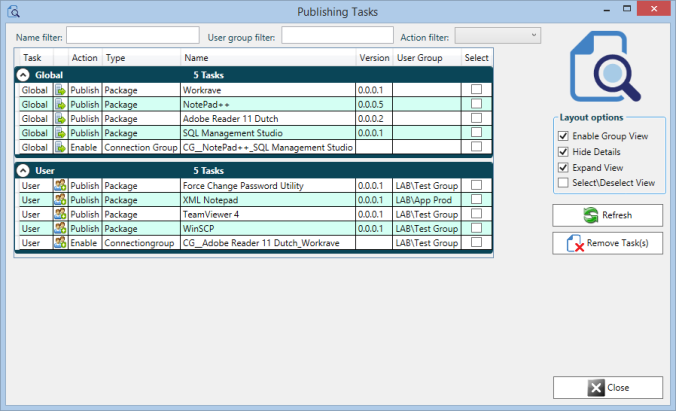

For the next release we are also adding user publishing support to App-V Scheduler. The current versions of App-V Scheduler are based on global deployment, which means the package is published at machine level. When you use global publishing, you need to manage application access yourself, for example with GPOs or UEM tools, just as if the applications were installed natively on the machine. User publishing is often used to control application access by publishing an application only to a group of users, which is a good way to separate application access if you don’t use UEM tools. App-V Scheduler will also support user publishing in combination with global publishing, so you can get the best of both worlds.

Availability

App-V Scheduler 2.2 is now available on the App-V Scheduler website.

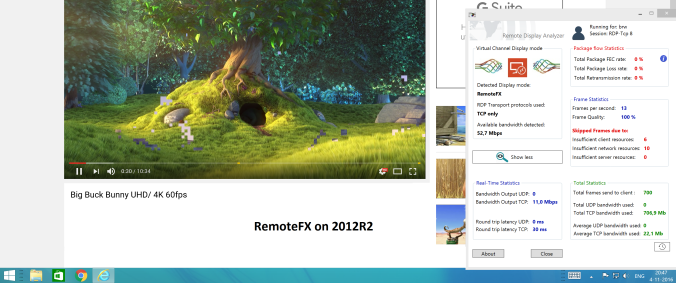

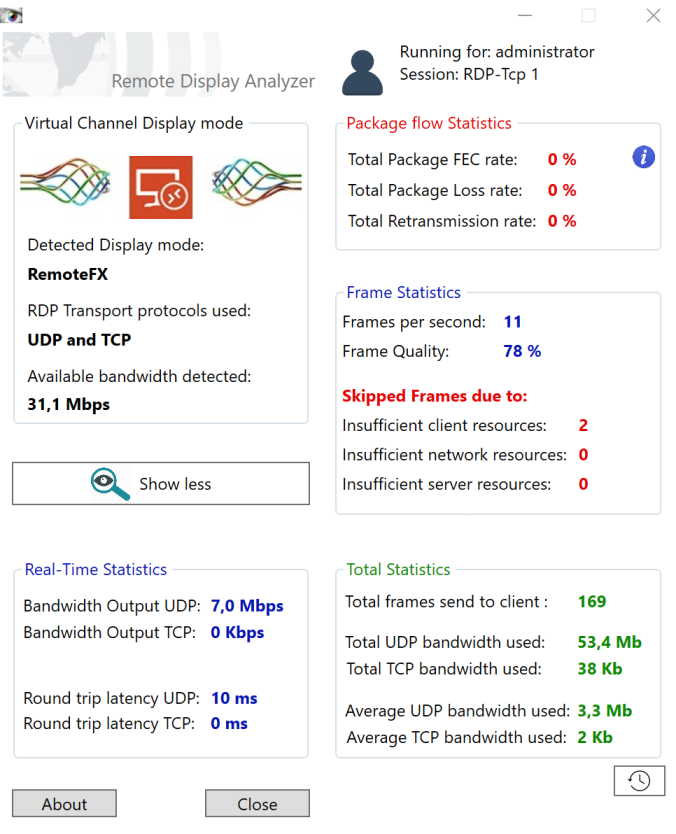
