In this blog post I want to share some experiences and practical tips on using DFS-R to sync local VDisk stores between multiple Provisioning Servers. Let’s begin with a quick intro:

If you are streaming your VDisks from the Provisioning Servers’ local storage, you often want to replicate the stores to other Provisioning Servers to provide HA for the VDisks and target connections. I’m a big fan of running VDisks from local storage because:

– No CIFS layer between the Provisioning Servers and the VDisks, increasing performance and eliminating bottlenecks

– No CIFS single point of failure

– No expensive clustered file system needed to provide HA for the VDisks

Caching on the device’s local hard drive or in device RAM is the best option when using local storage for your VDisk store; this way you can easily load balance and fail over between Provisioning Servers. Of course, there are some downsides to placing the VDisks on local storage:

– Need to sync VDisk stores between Provisioning Servers, resulting in higher network utilization during sync

– Double storage space needed

– Not an ideal solution when using a lot of private mode VDisks (the VDisk is continuously in use and cannot sync). Luckily we now have the Personal VDisk option in XenDesktop, so IMHO private VDisks aren’t really necessary anymore in an SBC or VDI deployment.

Because one size doesn’t fit all, you can always mix storage types for your VDisks depending on your needs. For Standard mode images combined with caching on the device hard drive or in device RAM, though, using the local storage of the Provisioning Server is a good option.

Since Provisioning Server version 6, Citrix has added versioning functionality. You can now easily see whether the Provisioning Servers are in sync with each other, but you still have to configure the replication mechanism yourself. I have worked with a lot of different solutions to replicate VDisks between Provisioning Servers, from manual copies to scripts using robocopy and rsync, run both on a schedule and by hand. Lately I use DFS-R more and more to get the job done. Because DFS-R provides a 2-way (full mesh) replication mechanism, it’s a great way to keep your VDisk folders in sync, but there are some caveats to deal with. Below I will give you some practical tips and a scenario you can run into when using DFS-R:

Last Writer wins

This is one of the most important things to deal with: DFS-R uses the last writer wins mechanism to decide which copy of a file overrules the others. It’s a fairly simple mechanism based on time stamps. Whoever changed the file last wins the battle, and that copy is synchronized to the other members, overwriting their existing (outdated) files!

If you compare this mechanism with how Provisioning Server works, you will quickly run into the following trap:

Imagine your environment looks like the image below.

Step 1:

Because you want to update the image, you connect the Provisioning console to PVS01 and create a new VDisk version. This creates a maintenance (.avhd) file on PVS01. Because this file is initially very small, it quickly replicates to the other Provisioning Servers.

Step 2:

You spin up the maintenance VM. At this point you don’t know which Provisioning Server the maintenance VM will boot from (this is decided by the load balancing rules), so let’s say it boots from PVS03.

You make changes to the maintenance image and shut it down.

Now the fun part starts! Depending on the changes you made and the size of the .avhd file, it can take some time to replicate the updated file to the other Provisioning Servers.

Step 3:

In the meantime, still connected to PVS01, you promote the VDisk to test or production.

When you promote the VDisk, the SOAP service will mount the VDisk and make changes to it for KMS activation etc.

Step 4:

You boot a test or production VM from the new version and you don’t see your changes. Worse, they are lost!

What happened? Well, you ran into the last writer wins trap of DFS-R:

The promotion takes place on the Provisioning Server your console is connected to, which in this example is PVS01. PVS01 doesn’t have the updated .avhd from PVS03 yet, so you promoted the empty .avhd file that was created when you clicked on new version.

Because the promote action updates the time stamp of the .avhd file, DFS-R replicates this file to the other Provisioning Servers (again quickly, because it’s empty), overwriting the one that contains your updates.
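To make the trap easier to follow, here is a minimal Python sketch of the four steps. It is a deliberately simplified model, not how DFS-R is implemented: each server holds one copy with a logical time stamp, and replication simply lets the newest time stamp win. The `FileCopy` class and the `replicate_last_writer_wins` function are names made up for this illustration.

```python
from dataclasses import dataclass


@dataclass
class FileCopy:
    modified: int  # logical time stamp of the last local write
    content: str   # stand-in for the contents of the .avhd file


def replicate_last_writer_wins(copies):
    """Converge every server to the copy with the newest time stamp."""
    winner = max(copies.values(), key=lambda c: c.modified)
    return {server: FileCopy(winner.modified, winner.content) for server in copies}


# Step 1: new version created on PVS01; the small file replicates everywhere.
copies = {s: FileCopy(1, "empty") for s in ("PVS01", "PVS02", "PVS03")}

# Step 2: the maintenance VM boots from PVS03 and writes the updates there.
copies["PVS03"] = FileCopy(2, "updated")

# Step 3: promote on PVS01 before the copy from PVS03 has arrived. This
# touches the stale copy on PVS01 and gives it the newest time stamp.
copies["PVS01"] = FileCopy(3, copies["PVS01"].content + "+promoted")

# Step 4: replication settles the conflict and the newest time stamp wins.
result = replicate_last_writer_wins(copies)
print(result["PVS03"].content)  # prints "empty+promoted": the updates are gone
```

Swapping steps 3 and 4 in the sketch (replicate first, then promote) keeps the updated content, which is exactly what the workarounds aim for.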

Here are two ways to work around this behaviour:

Option 1 :

After you make changes, wait until the replication is finished (watch the replication tab in the Provisioning console) and promote the version only when every Provisioning Server is in sync.

Option 2 :

If you can’t wait, connect the console to the Provisioning Server where the update took place and promote the new version there, so you are sure you promote the right .avhd file.
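If you want an extra check next to the replication tab before promoting, you could compare the copies of the .avhd file on each server yourself. The Python sketch below is only an illustration: `file_digest` and `copies_in_sync` are hypothetical helper names, and you would have to pass in your own paths to the store on each Provisioning Server. Hashing reads every copy completely, so expect it to be slow for large files.

```python
import hashlib
from pathlib import Path


def file_digest(path, chunk_size=1024 * 1024):
    """SHA-256 of a file, read in chunks so large files do not fill memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def copies_in_sync(paths):
    """Return True when every path exists and all copies are byte-identical."""
    paths = [Path(p) for p in paths]
    if not paths or not all(p.is_file() for p in paths):
        return False
    # Cheap check first: differing sizes mean replication has not finished.
    if len({p.stat().st_size for p in paths}) != 1:
        return False
    return len({file_digest(p) for p in paths}) == 1
```

Call `copies_in_sync` with one path per Provisioning Server and promote only when it returns `True`.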

Below I will give some other practical tips when using DFS-R to replicate your VDisk stores.

1. Ensure you always have enough free space left for staging files, and make your staging quotas big enough to replicate the whole VDisk (1.5 times the VDisk size, for example)

2. Create multiple VDisk stores for your VDisks. This allows you to create multiple DFS-R replicated folders, and replication works better with multiple smaller folders than with one very large one

3. Watch the event viewer for DFS-R related messages. DFS-R logs very informative events to the event log, so keep an eye on high watermark events and other events related to replication issues

4. Check the DFS-R backlog to see what’s happening in the background and to verify that no files are stuck in the queue. You can use the dfsrdiag tool to watch the backlog, for example:

dfsrdiag backlog /receivingmember:PVS03 /rfname:PVS_Store_01 /rgname:PVS_Store_01 /sendingmember:PVS01

5. Exclude .lok files from replication; they should not be the same on every Provisioning Server

6. Plan big DFS-R replication traffic during off-peak hours. While DFS-R is replicating, booting your targets will be slower. You can also limit the bandwidth used for DFS-R replication traffic

7. Before you start, check your Active Directory schema and domain functional level. If you want to use DFS-R, your Active Directory schema must be up to date and support the DFS-R replication objects. Also note that only DFS-R replication is necessary; no domain-based namespaces are needed.
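Tips 1 and 4 lend themselves to a small helper. The Python sketch below is only an illustration under a few assumptions: the function names are made up, the 1.5 factor is the rule of thumb from tip 1, the quota is expressed in MB, and the server and store names are the ones from the example above. It only builds the dfsrdiag command lines (using the same switches as in tip 4) so you can paste or schedule them; it does not run them.

```python
import math
from itertools import permutations


def staging_quota_mb(vdisk_size_gb, factor=1.5):
    """Staging quota in MB for a VDisk of the given size (1.5x rule of thumb)."""
    return math.ceil(vdisk_size_gb * 1024 * factor)


def backlog_commands(members, rgname, rfname):
    """Build one dfsrdiag backlog command per sender/receiver pair (full mesh)."""
    return [
        f"dfsrdiag backlog /receivingmember:{receiver} /rfname:{rfname} "
        f"/rgname:{rgname} /sendingmember:{sender}"
        for sender, receiver in permutations(members, 2)
    ]


print(staging_quota_mb(40))  # a 40 GB VDisk gives 61440 (MB)

servers = ["PVS01", "PVS02", "PVS03"]
for command in backlog_commands(servers, "PVS_Store_01", "PVS_Store_01"):
    print(command)
```

With three Provisioning Servers this prints six commands, one for each direction between each pair of servers, so you can see the backlog of the whole mesh instead of a single connection.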

Conclusion

I can be very brief here: DFS-R can be a very nice and convenient way to keep your VDisk stores in sync, but you must understand how DFS-R replication works and how it behaves when combined with Provisioning Server. Hopefully this blog post gave you a better understanding of using DFS-R in combination with Provisioning Server. Keep the points above in mind when you consider using DFS-R as the replication mechanism for your VDisk stores.

Please note that the information in this blog is provided as is without warranty of any kind.

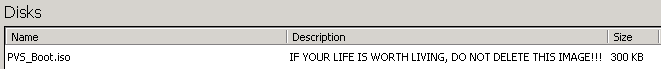